Jenkins war story - Agents keep disconnecting

Today I would like to share an issue that stumped a number of our engineers over the last couple of days.

We had just swapped our production Jenkins over to a new Kubernetes cluster, this was tested on the day and all seemed fine. The old cluster was stopped and left around in case of issues later.

The next day we got a number of support requests from other engineers that their builds were stuck and the build agent was disconnected.

Having a quick look we could see

Cannot contact cloud-ubuntu587aa2: hudson.remoting.ChannelClosedException: Channel "hudson.remoting.Channel@38da1aa3:cloud-ubuntu587aa2": Remote call on cloud-ubuntu587aa2 failed. The channel is closing down or has closed down

ERROR: Issue with creating launcher for agent cloud-ubuntu587aa2. The agent has not been fully initialized yet

cloud-ubuntu587aa2 was marked offline: Connection was broken: java.io.EOFException

23:35:53 at java.base/java.io.ObjectInputStream$PeekInputStream.readFully(ObjectInputStream.java:2872)

23:35:53 at java.base/java.io.ObjectInputStream$BlockDataInputStream.readShort(ObjectInputStream.java:3367)I've been using Jenkins for close to 9 years now and that's always a dreaded exception to see. If you google around the best reference I was able to find was from Jeff Thompson (a maintainer of the Jenkins remoting library):

there are also a number of cases where people have reliability problems for a wide variety of reasons. Sometimes they're able to stabilize or strengthen their environment and these problems disappear.

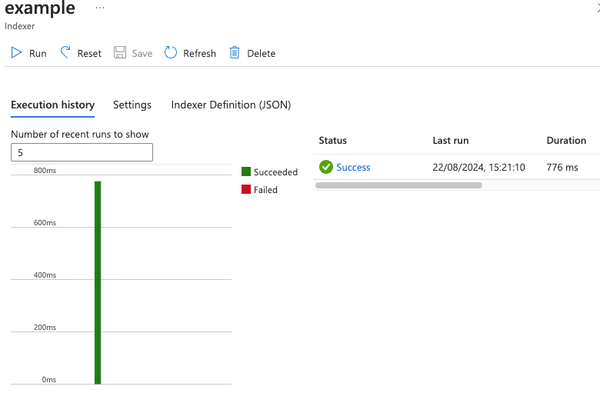

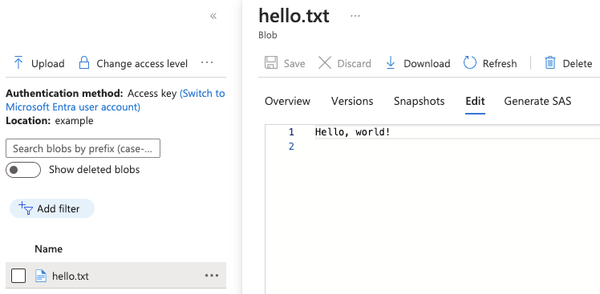

Okay, well then we started tracking back what could have changed. Luckily for us we build our entire Jenkins instance with the Configuration as Code plugin. We were able to quickly answer questions about what changed by looking at our Git commits and then verifying in the Azure portal (just in case). The only configuration that was changed between Jenkins instances was the Virtual Network that it was running in and the Kubernetes cluster (which was still the same version).

Perplexing, okay it could be an Azure issue, maybe we'll reach out to them, but let's keep digging for now.

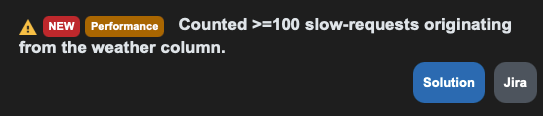

We tried out the Health Advisor by CloudBees, we got a nice report telling us that the weather column was causing some slow requests.

Okay, sure... Well the fix was pretty trivial to apply and looked harmless. We ran the script in dry run first to check what it would do, lots of folders showed up that would have it removed, we disabled try run and let it do its thing.

A few minutes later another agents goes offline, not surprising that this was unrelated, but hey maybe our instance will be faster after this.

Back to our favourite search engine we go. I found an issue from back in 2019 JENKINS-56535 where someone reported:

We fought a little and found the issue. We spun up a test-jenkins from a backup of our production jenkins server. Both server had same ID and somehow after aprox two hours of an agent creation, the agents created by one server were being removed by other server.

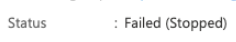

I asked other members of the team what happened to the old cluster, and was told that it had been turned off. I double checked this and found:

The Kubernetes cluster had failed to stop on the night, I logged into it and all pods and nodes were still running. Including Jenkins...

The Azure VM Agents plugin we use for provisioning agents runs a background task that periodically cleans up orphaned resources based on a tag that it adds to the cloud resources which is the Jenkins 'instance id'. This shouldn't end up deleting other agents even if you use the same resource group.

The problem was: when Jenkins was moved to the new cluster, a backup of it's disk was taken and migrated to the new disk, so we had two Jenkins servers running with the same instance ID. Uh oh.

Well time to test all this out, I deleted the Jenkins deployment, deleted all of our 'disconnected' agents. Monitored it for an hour or so (previously every few minutes we would lose an agent) and declared it a success.

Lesson learnt.

- Be very careful running multiple Jenkins instances with the same ID.

- Always check the service is actually turned off